why we need a cybersecurity chill pill…

The foreign policy wonk blog Best Defense is making the case that we need to turn the inevitable wind-down of cybersecurity hysteria in the media, which followed the news splash made by revelations about the Stuxnet worm, into our permanent attitude. Basically, the media and politicians are really good at overreacting to an important issue and then forgetting it once it’s cleansed out of the news cycle, when what we need is a balance between the two. We have to be aware, but not overly paranoid, that we could get hit with another malicious horror that turns our machines against us. Sounds great, but it’s kind of vague and cryptic. On a scale of one to ten, with one being completely calm and ten being tear-your-hair-out paranoid, how freaked out should we be? A five? A three? A six? While I’m not an expert on guesstimating the appropriate panic levels about security issues, what I can add is that making the kind of malware that can strike real world targets is very, very hard, and we shouldn’t be terrified of a viral infestation of our power plants and grids because it takes a lot of time and effort to execute such an attack.

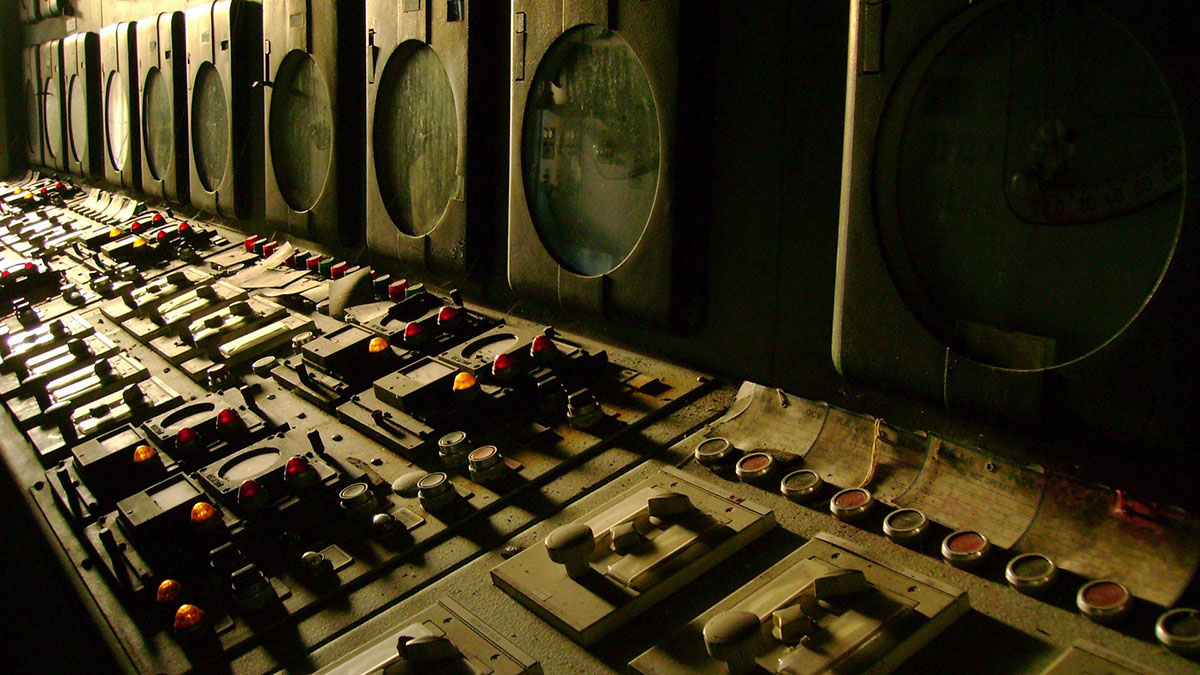

One of the things that really set Stuxnet apart from other malware was that it targeted specific SCADA machines and showed great familiarity with Siemens Step 7 software at a very low level. And while that made it very scary, it also gave it very limited potency. This malware is less like a cluster bomb and more like a surgeon’s knife, and like any surgical tool, it’s designed with a very specific purpose in mind. Going after another set of software tools designed to control other SCADA machines would demand the same intimate familiarity with them, and may require such exhaustive rewrites of something Stuxnet-like that we’re not even dealing with the same worm anymore. And over the time it will take to develop it, who knows what new security patches will be applied to operating systems and the targeted software? Getting a worm onto a machine isn’t such an easy task anymore. With a lot of users careful, if not paranoid, about leaving strange files untouched in their junk mail filters, and operating systems popping up a warning every time something potentially compromising happens, you have to rely on privilege escalation attacks and the users’ own bad judgment, hence the prevalence of phishing and the somewhat more elaborate spear phishing attacks to circumvent passwords and user permission managers.

Now, there are obviously other ways of getting into systems, which rely on lax security when it comes to a wi-fi connection, or on physically spying on what people are doing to get a password, or on plugging in a USB drive with a viral payload, but the point is that hacking into systems today is like trying to hit a moving target. It’s not a trivial task if you encounter even a modicum of what’s considered basic security nowadays and the users faithfully keep their machines updated. But that said, industrial machinery is actually updated very infrequently because if it’s working, applying an update carries what seems like an unnecessary risk. Even the most reliable vendor will eventually stumble and something will go wrong. So why take a chance, right? That leaves unpatched SCADA machines exposed for a very long time, giving potential attackers a long window during which they can get very familiar with how the machines work, how they communicate with the software, and at which events the communication can be seamlessly altered and sent back to the machinery, triggering it to miss a crucial cycle or exceed some acceptable bound. So essentially, another Stuxnet is possible and big industrial machinery is a likely target, but the next worm will take a while to develop, will target a specific system, and can be thwarted by applying regular updates and maintaining redundant systems and good security protocols.