Exploring bleeding-edge experiments, oddities, new and bizarre discoveries, and fact-checking conspiracy theories since 2008. No question is out of bounds and no topic is too strange for a deep dive.

# science

Is time travel possible? What would happen if you did it? How would you do it? The answers to these questions are surprisingly complicated and weird.

# science

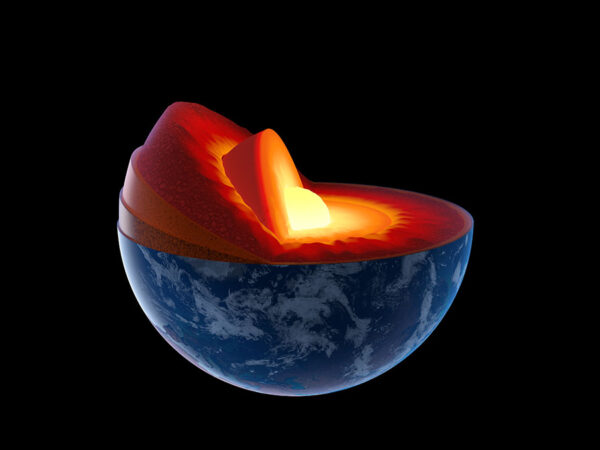

According to the news, our planet’s core has stopped spinning and will soon reverse. What’s really happening is a lot less exciting, but still pretty neat.

# science

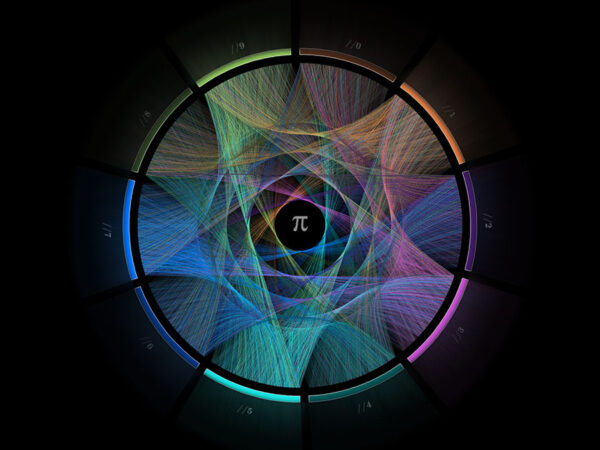

Stephen Wolfram joins a long line of theoreticians who believe they have uncovered how the universe really works. He, like all of them, is almost certainly wrong.

# oddities

Flat Earthers don't seem to realize that their theory violates basic laws of physics.

# science

Tractor beams aren't just for aliens cruising Earth and looking for people to abduct anymore. In fact, you can build one yourself.

# science

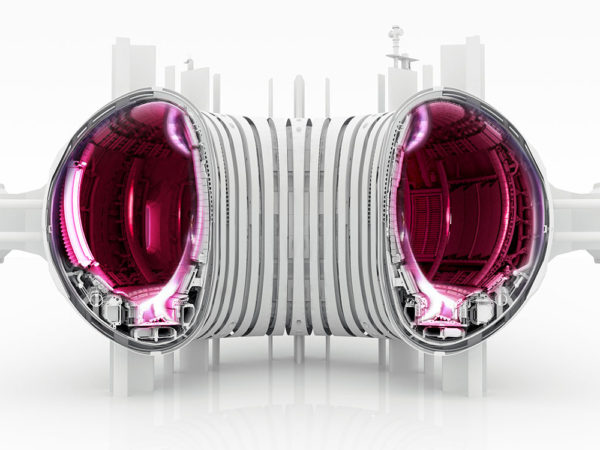

We have a new design for a fusion reactor that's showing great promise. We owe it to ourselves to give it a fair shot at making fusion work.

# science

On further review, the study claiming that the universe is expanding at a steady rate ended up independently proving accelerating expansion.

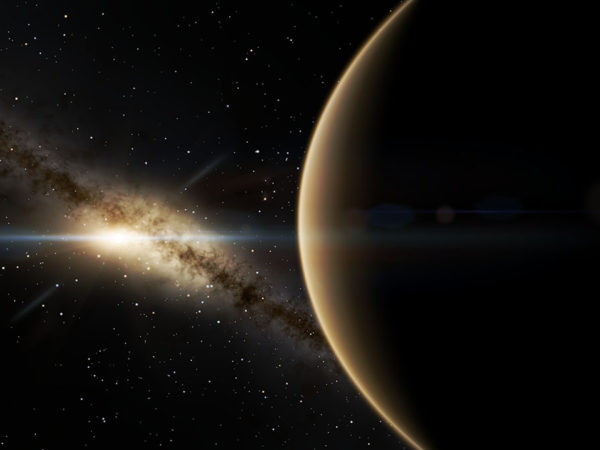

# space

While studying mysterious fast radio bursts, astronomers figured out why the universe weighs as much as it does and where that mass went.

# science

The EmDrive and similar schemes would require radically different laws of physics to work. But something as trivial as physical impossibility isn't deterring their advocates...

# science

For an instant spacesuit, just add plasma or electrons. But don't stay in it for too long...