Exploring bleeding-edge experiments, oddities, new and bizarre discoveries, and fact-checking conspiracy theories since 2008. No question is out of bounds and no topic is too strange for a deep dive.

# tech

Quantum computers are coming. But what exactly are they, and what can they do for us?

# science

It turns out that some quantum systems don't want to decay into their most chaotic state. Instead, they want to return to the state in which they started.

# space

The late physicist Stephen Hawking predicted that black holes evaporate as their massive tidal forces interfere with quantum particles. Now, experiments are starting to confirm his predictions.

# science

If you think you know how gravity works, you’re probably wrong. While we can explain what it does, figuring out how it does it has been a decades-long exercise in frustration and dead ends.

# science

The most thorough study of quantum entanglement to date shows that "spooky action" is really happening.

# science

Stephen Hawking hasn't actually solved the black hole information paradox, and his latest work raises more questions than it answers.

# science

When you're trying to start carbon-based life, not just any carbon isotope will do, and the one you need wouldn't even exist without a quirk of quantum mechanics.

# science

Raymond Tallis has a list of scathing criticisms aimed at physics and neurology. It would sure help his points if he knew what he was talking about...

# science

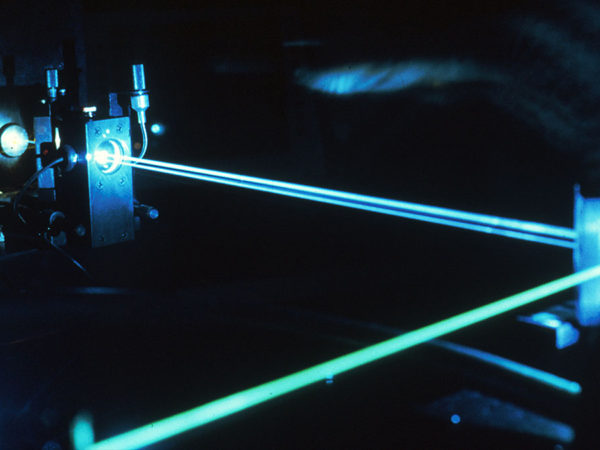

A new experiment unlocks quantum behaviors that can make laser beams more cohesive and let them follow more complicated trajectories.

# space

We just found the Higgs boson, and already a few scientists think it might be responsible for destroying the universe on a random day in the unspecified future.