when science fiction and computer science meet

Science fiction writer Ted Chiang did a good deal of research into artificial intelligence, particularly the kind of general-knowledge, omni-AI system which I’ve been labeling completely uneconomical whenever I mention it in any practical context. And in a post about his inspiration on the subject, he outlines exactly why a custom, learning, adaptive artificial intelligence system designed to do anything and everything is bound to be grossly impractical, not just from a philosophical standpoint, but from a logistical one as well. It takes far too long to build it, then train it to do whatever it is you want it to do. Considering that even humans can’t do everything, and that at some point we all need to specialize in a rather narrow area of skill and expertise, you’d have to devote decades upon decades to training your fantastic machine before it could do something really impressive.

Teaching machines is really nothing new, and there are plenty of ways to get robots and computers to make the decisions you need them to make, at least for problems that play to what computers are built to do, like building complex probabilistic models and crunching numbers. But when it comes to things humans can do as organisms, computers tend to sputter. Without a mechanism to learn very quickly through repeated trial and error in each area they try to master, they may find a way to move around a lab maze, but not so much in the real world, where they have to deal with new stimuli and interference they simply weren’t designed to work around. Those things are such common sense to us that we forget to account for them. As Chiang summarizes…

[N]avigating the real world is not a problem that can be solved by simply using faster processors and more memory. There’s more and more evidence that if we want AI to have common sense, it will have to develop it in the same ways that children do: by imitating others, by trying different things and seeing what works, and most of all by accruing experience. This means that creating a useful AI won’t just be a matter of programming, although some amazing advances in software will definitely be required; it will also involve many years of training. And the more useful you want it to be, the longer the training will take.

That’s pretty much spot on, with the added bonus of noting that simply speeding up training sessions isn’t an approach we could take with general artificial intelligence. Though he’s wrong that we’re not even close to the kind of robot that could walk into the kitchen and make you eggs in the morning (because we already have a few that fetch beer on command), and his reasoning for why speeding up trials wouldn’t work has several major problems (we can’t compare processors to the neurological limits of our bodies), his initial statement is a valid one. Trials in the real world take a certain amount of time, and you have to be thorough to train a robot to do what you need it to do. The experiment has to be set up, the code compiled after the latest tweak, and the run itself has to play out at real-world speed. Afterwards, you have to analyze what went right and what went wrong, tweak the code, debug and recompile it, reset your experiment, and so on.
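To put rough numbers on why faster hardware alone doesn’t buy much, here is a back-of-the-envelope sketch of a single trial cycle. Every duration in it is a made-up illustrative value, not a measurement, and the `TrialCycle` class, its field names, and the assumption that only the compile step scales with processor speed are all mine rather than anything from Chiang’s post.

```python
from dataclasses import dataclass


@dataclass
class TrialCycle:
    """One pass through the train-and-tweak loop, in minutes (hypothetical values)."""
    setup: float      # physically arranging the experiment
    compile: float    # building the code after the latest tweak
    execution: float  # the robot acting in the real world
    analysis: float   # working out what went right and what went wrong
    rework: float     # tweaking and debugging before the next run

    def total(self, cpu_speedup: float = 1.0) -> float:
        # Assumption: only the compile step gets shorter with a faster processor.
        # Everything else is bounded by people and by the physical world.
        return (self.setup + self.compile / cpu_speedup
                + self.execution + self.analysis + self.rework)


cycle = TrialCycle(setup=30, compile=5, execution=20, analysis=45, rework=60)
baseline = cycle.total()               # minutes per cycle on current hardware
faster = cycle.total(cpu_speedup=10)   # same cycle with a 10x faster processor

print(f"baseline: {baseline} min, with 10x CPU: {faster} min")
print(f"time saved per cycle: {100 * (1 - faster / baseline):.1f}%")
```

With these invented numbers, a tenfold faster processor trims only a few percent off each cycle, because nearly all of the time goes to steps no chip can speed up. Plug in your own estimates and the exact figure will change, but as long as the real-world steps dominate, the shape of the result stays the same.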

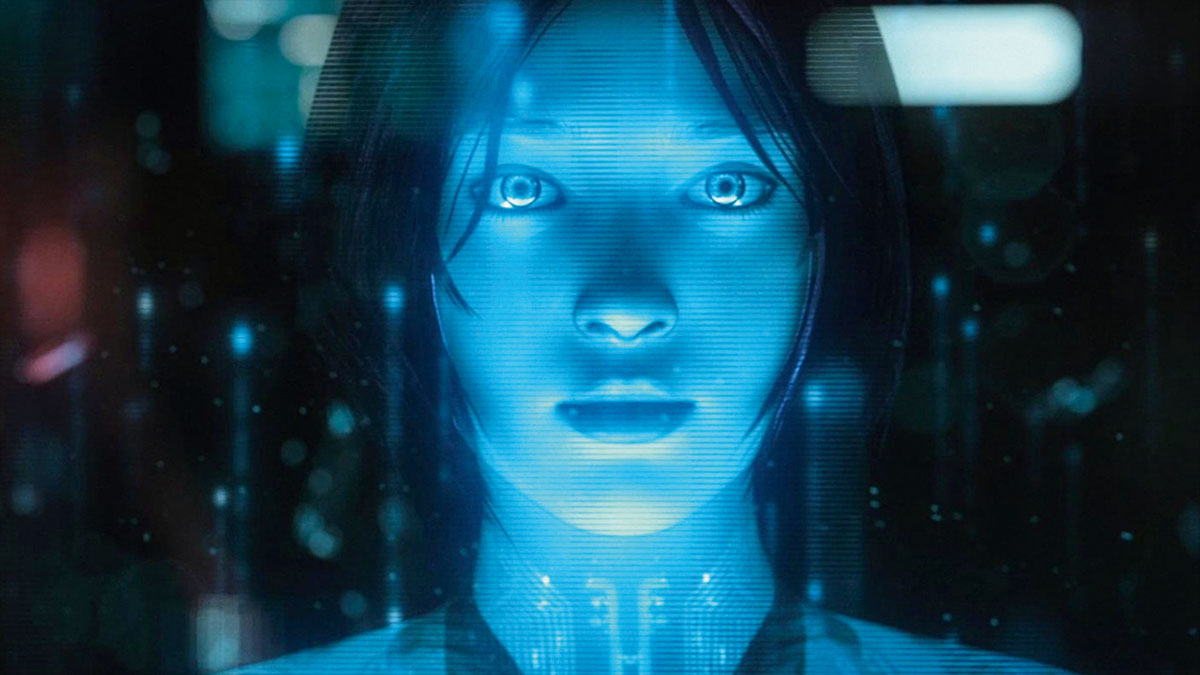

And all this costs some very serious cash. While you spend decades whipping your AI into shape, who’s to say that your funding won’t be cut in another financial disaster? What happens if the people who originally built the system leave to do other things? Who’s going to be in charge of all this general training that will last longer than some people’s entire careers? It’s much easier and more cost-effective to build specialized intelligent agents which are trained to do a few specific tasks quickly and extremely well. Then, maybe at some point we could combine them into something impressive, bringing together mobile systems, rule-based and probabilistic AI, and natural speech recognition software to help us process huge reams of complex data on the fly. But even there, our hypothetical homunculus would have to be trained to focus on specific tasks rather than try to be an omni-app that needs non-stop training to keep up with the humans around it.

[ story via John Dupuis ]