dissecting the one supercomputer to rule them all

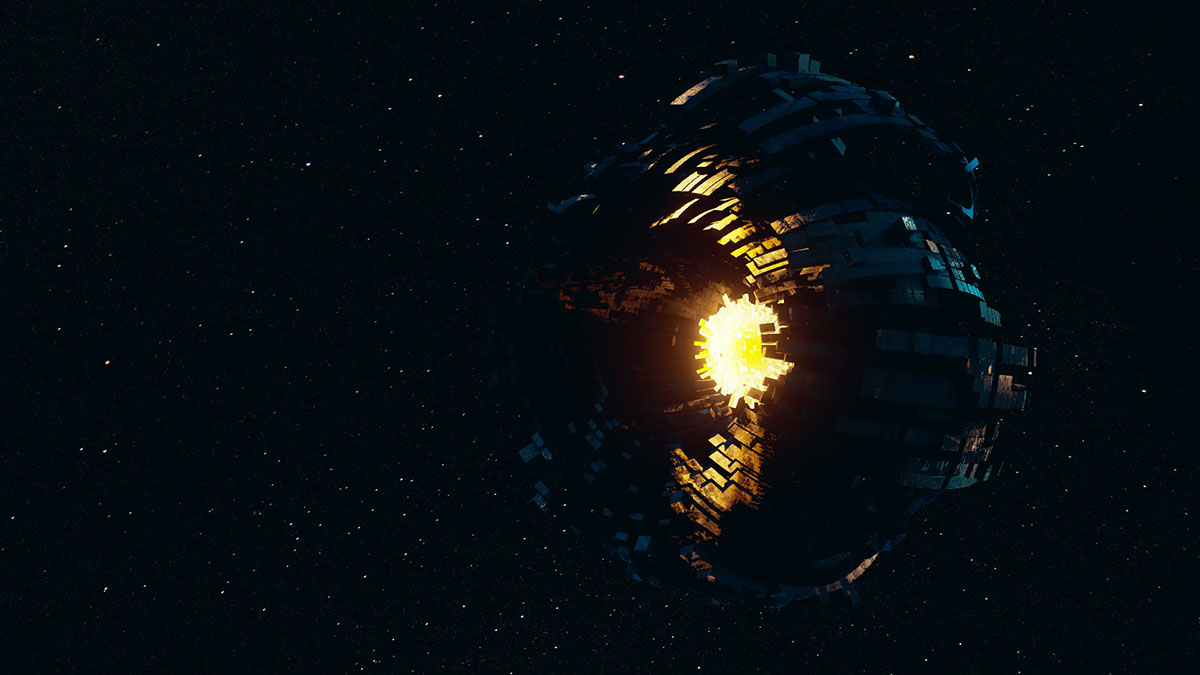

Computer scientist Robert Bradbury had an idea. Imagine a swarm of satellites or a megastructure built to harness the energy of an entire star, essentially a Dyson shell. Now imagine that it transmits its excess energy to another, similar construct wrapped around it, and this layer does the same, and so on until the farthest structure somewhere in the vicinity of Neptune absorbs whatever is left. Unlike typical Dyson shells, though, these layers don’t just soak up the energy of the star inside them. They use all this power to run the kind of programs that would make our biggest supercomputers seem like overgrown abacuses by comparison.

He called this structure a Matreshka Brain, from the concept of a матрёшка, the famous Russian nesting dolls created in 1890 by an artisan and a painter inspired by trips to Japan, and he envisioned that its powers could be almost godlike, especially if it were made of hypothetical materials that allow for peak processing speeds. And while he envisioned more or less melting down a planet to extract enough silicon for the task, we now know something similar can be done with lasers and very specialized composite materials.

However it will work, the bottom line is that a computer stretching from Mercury to Neptune would be hard at work, calculating answers to problems as fast as the laws of physics allow. Of course the big question is what exactly it would be calculating and sci-fi writers have envisioned everything from uploading organic minds into a virtual reality to simulating an entire universe, to manipulating the fabric of time and space itself. All of this might be possible — except the mind uploading part that is — but with the benefit of decades of new research, the idea of a solar system sized supercomputer may be overdue for an update.

First and foremost, space is full of all sorts of high speed debris and a giant megastructure with several layers is an enormous target that would have to be very heavily defended. Asteroids and comets from the star’s equivalent of an Oort Cloud or flybys from alien solar systems could do a lot of damage to such a machine over just a few years and the Brain would have to be a nimble shapeshifter or effectively invulnerable to cope with mountains of rock and metal flying faster than bullets in the not insignificant region of space it occupies.

Secondly, its design might be maximized for computational speed and low latency, but today we know that it’s not just how fast something is computed that matters, but how. Some algorithms run best on conventional binary logic, others are best left to quantum circuits, and yet others are perfect for hardware that mimics neural networks. Some problems we’d expect a very advanced spacefaring species to encounter should probably be broken up into steps that are parallelized or distributed among these different kinds of circuits and then re-assembled into the required output, kind of like Shor’s algorithm, which pairs classical pre- and post-processing with a quantum subroutine, but more complex and on much grander scales.
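To make the Shor's algorithm comparison a little more concrete, here is a minimal Python sketch of how that algorithm splits its work. The classical parts (picking a base, taking greatest common divisors) run as ordinary code, while the order-finding step is the piece a quantum circuit would handle. In this sketch `find_order` is a hypothetical brute-force stand-in for that quantum subroutine, so it only illustrates the division of labor and offers no speedup. The function names and the range of bases tried are assumptions made for illustration.

```python
from math import gcd


def find_order(a, n):
    """Return the smallest r > 0 with a**r % n == 1.

    Assumption: this brute-force loop stands in for the quantum
    order-finding subroutine. It requires gcd(a, n) == 1.
    """
    r, x = 1, a % n
    while x != 1:
        x = (x * a) % n
        r += 1
    return r


def factor_with_order_finding(n, bases=range(2, 50)):
    """Classical wrapper: try bases until one yields a nontrivial factor."""
    for a in bases:
        if a >= n:
            break
        g = gcd(a, n)
        if g > 1:
            # The base already shares a factor with n; no order finding needed.
            return g, n // g
        r = find_order(a, n)  # the step a quantum circuit would perform
        if r % 2 == 1:
            continue  # odd order: this base is not usable
        y = pow(a, r // 2, n)
        if y == n - 1:
            continue  # trivial square root of 1: try another base
        p = gcd(y - 1, n)
        if 1 < p < n:
            return p, n // p
    return None  # no factor found with the bases tried (e.g. n is prime)


print(factor_with_order_finding(15))  # (3, 5)
```

The point of the sketch is the shape of the computation: most of the code is routine classical bookkeeping wrapped around one specialized step, which is the kind of hand-off between different circuit types described above.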

While it’s possible that the shells of the Matreshka Brain can easily accommodate all these different circuits and architectures with hypervisors keeping track of how calculations will be executed and where, much in the same way data centers work today, this will necessitate a fair bit of overhead on what is essentially a single giant computer. Its primary architecture would resemble today’s internet, with edge nodes and complex routing deciding where and how each request is executed. And at that point, why not just decentralize the system, especially since it would have to be made of separate satellites to remain in a stable orbit around a star, unlike solid shells, which wouldn’t be gravitationally bound to it or each other?

And that brings us to our third issue. A hypercomputer that large means a species is putting all of its computational eggs in one basket. If something happens to it like a system crash, where and what is the backup? Distributed, redundant systems are more reliable and easier to use, especially if they sync data from each node for easy access. If one goes down, another is easily available. In fact, a far better iteration of a Matreshka Brain can be found in the Culture novels of the late great Iain M. Banks who envisioned a vast network of spacefaring AIs sharing their knowledge and storing complete backups of themselves and the living things with which they interacted. (As a bonus, his descriptions of how an AI would think are spot on.)
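The redundancy argument can be put in rough numbers. The sketch below assumes each node is up with some fixed probability and that nodes fail independently of one another, which real systems only approximate. The 99% figure is a made-up illustration, not a measurement of any actual system, and `combined_availability` is a hypothetical helper name.

```python
def combined_availability(node_availability, replicas):
    """Probability that at least one of `replicas` nodes is up.

    Assumption: node failures are independent, so the chance that
    every replica is down at once is (1 - node_availability) ** replicas.
    """
    if not 0.0 <= node_availability <= 1.0:
        raise ValueError("node_availability must be between 0 and 1")
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return 1.0 - (1.0 - node_availability) ** replicas


# Hypothetical example: each node is reachable 99% of the time.
for n in (1, 2, 3):
    print(f"{n} replica(s): {combined_availability(0.99, n):.6f}")
```

Under these assumptions, every added replica multiplies the chance of total outage by the single-node failure rate, which is why a handful of modest, independent nodes can be more dependable than one enormous machine with no backup.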

The bottom line here is that Matreshka Brains are interesting concepts to consider, but our newfound experience in running large computer networks, on a scale far smaller than any one of these structures and already running into problems that require distributed systems, tells us that they’re not very practical. We can achieve similar computing prowess more reliably and without heavily investing in megastructures meant primarily for doing a lot of math, and if there’s a sci-fi idea whose blueprint we should follow, it’s that of the Culture and its sentient AI ships, which exist in symbiosis with their humanoid partners.