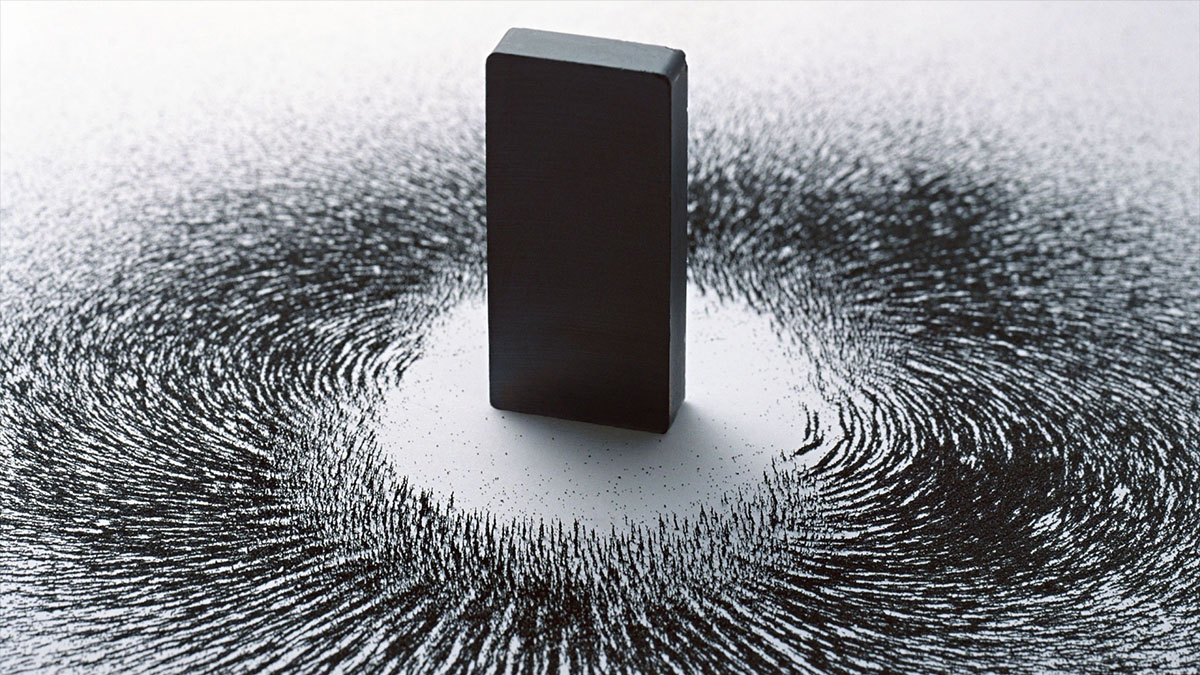

to boost data density, chill and apply magnetism

The good news is that we found a way to drastically increase storage density for computers. The bad news is that it's not very practical.

Chances are, your computer’s current hard drive can store around 500 GB, and if you’re a real video editing or graphics enthusiast, you either bought or customized your computer to have a 1 TB drive. But what if, in the same space that your hard drive takes up now, you could host a multi-PB (petabyte) drive, enough to run your own little data center from the comfort of home? Impossible, right? Today’s common storage methods in no way allow for that sort of data density. Why, to make that happen, you’d need to store your information on an atomic scale, but individual atoms are too unstable to retain it, flipping their magnetic states hundreds of times per second and losing your data in the process.

Physics wagged its finger at engineers, warning them to keep their ambitions for increasing data density away from the quantum world. In reply, they performed a very nifty experiment and created a 96-atom-per-byte drive, aligning 12 iron atoms with alternating, opposite magnetic orientations to store one bit and lining eight of these dozen-atom blocks side by side into a full byte. Just to show that this storage method works, they stored the individual letters of the word “think” as their ASCII codes, writing the values one after another.
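To make the bookkeeping concrete, here is a minimal sketch of what gets written into such a byte. It assumes plain 8-bit ASCII and the 12 atoms per bit described above; the function names are mine, purely for illustration, and have nothing to do with the actual lab software.

```python
# Illustrative only: constants come from the figures quoted in this post.
ATOMS_PER_BIT = 12
BITS_PER_BYTE = 8


def ascii_bits(word):
    """Return each character of `word` as an 8-bit binary string."""
    return [format(ord(ch), "08b") for ch in word]


def atoms_for_bytes(n_bytes):
    """Number of iron atoms needed to hold `n_bytes` bytes at once."""
    return n_bytes * BITS_PER_BYTE * ATOMS_PER_BIT


word = "think"
for ch, bits in zip(word, ascii_bits(word)):
    print(ch, bits)

print("atoms in one byte:", atoms_for_bytes(1))
```

Each letter fits in a single byte, so holding one letter at a time takes 96 atoms, while holding all five letters at once would take 480.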

Turns out that a block of a dozen iron atoms separated by nitrogen can retain its magnetic state for hours at a time, enough to read and write the binary data we use to encode text, sound, images, and programs. But of course there are catches. While the iron atom cell does hold on to its state, it is still too unstable to use in practical hardware. You can only read and write the data using a scanning tunneling microscope, which is not the kind of technology one could efficiently miniaturize to work with hundreds of megabytes per second. And a final and very important catch: the whole thing only works at -450 °F or -268 °C, awfully close to absolute zero, and at a similar operational temperature to a prototype of quantum transistors.
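For anyone who wants to check how close to absolute zero that is, the two figures quoted above can be run through the standard conversion formulas. This is just a quick sketch, and the function names are my own.

```python
def fahrenheit_to_celsius(f):
    """Standard conversion: subtract 32, then scale by 5/9."""
    return (f - 32) * 5 / 9


def celsius_to_kelvin(c):
    """Kelvin is Celsius shifted by 273.15."""
    return c + 273.15


c = fahrenheit_to_celsius(-450)
print(f"-450 F is about {c:.1f} C")
print(f"-268 C is about {celsius_to_kelvin(-268):.2f} K above absolute zero")
```

In other words, the quoted operating temperature is only about five kelvin above absolute zero.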

This means you can forget about having this diminutive architecture replace today’s massive data centers. The whole setup is too energy intensive, expensive, slow, and unstable. However, it does show that data can be more or less stably held at surprisingly high densities. After all, just a dozen iron atoms stretched the time before stored data is lost from one millisecond to several hours. Today, we use something like a million atoms to safely store one bit, so if we can cut it down to hundreds of thousands, if not tens of thousands, we can radically boost data densities on hard drives.
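The arithmetic behind that claim is simple enough to sketch. The snippet below takes the rough figure of a million atoms per bit at face value and assumes density scales inversely with atoms per bit, ignoring all the overhead of real hardware, so treat the output as a best-case ceiling rather than a forecast.

```python
# Rough figure quoted in this post, not a measured constant.
CURRENT_ATOMS_PER_BIT = 1_000_000


def density_gain(atoms_per_bit, current=CURRENT_ATOMS_PER_BIT):
    """Idealized density multiplier if each bit shrinks to `atoms_per_bit` atoms."""
    return current / atoms_per_bit


for n in (100_000, 10_000, 12):
    print(f"{n:>7} atoms/bit -> about {density_gain(n):,.0f}x denser")
```

Getting down to hundreds of thousands of atoms per bit buys roughly a tenfold gain, tens of thousands buys about a hundredfold, and the 12-atom bit from the experiment would be tens of thousands of times denser on paper.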

The estimates of hundredfold density increases on some popular science blogs seem overblown, especially when we consider the overhead, but increasing density by a more realistic factor of 10 by the time it’s ready for practical application would be great. Keep in mind that as neat as these dozen iron atoms may be, they were never intended to be a practical method of data storage. The whole point was to see just how small we could go until we start seeing stable data retention. When we can store the experimental data for a few years, we will know that we have a valid technique that could be used by a new generation of mass-produced solid state drives, which will hopefully last more than a year at a time.

And there’s a curious little tangent that hasn’t been mentioned by the media so far, at least to my knowledge. Being able to store information for several hours on devices with extremely high data densities could also be useful for boosting RAM, which would be a major performance enhancement. To get really technical for a moment, you can already populate gigabytes of RAM with data from a hard drive of some sort and keep it perpetually alive as a way to reduce the number of reads and writes on a solid state drive, increasing its lifespan since it’s the I/O operations that stress it most.

All that also means you’ll have much faster access to your largest data files, just screaming along by avoiding the need to find and reassemble a file usually scattered all across your drives before using it. Since we won’t need RAM to be stable for years, it can use smaller and less stable storage structures in the future. However, we still need to make sure that such structures can be read quickly and efficiently, and will not require that you run them during the Arctic winter to keep them cold enough to retain anything. I do not care how fast that setup would be because not even for the fastest computer on Earth will I put on a parka and travel by snowmobile or dog sled up to the North Pole to write code in a tent, surrounded by polar bears.

Yes, considering that the iron atom structure we’re discussing would have to be operated on the surface of Triton, this would be a significant improvement, but not nearly enough to survive the icy customer reviews. Now, to be serious for a second, we could imagine a future supercomputer plugged into a lab super-chilling hyper-dense RAM to run complex and data-intensive calculations faster, though it seems dubious that it would be a major goal for the IBM team behind this project. Their bosses know very well that supercomputing is a niche business at best…

See: Loth, S., Baumann, S., Lutz, C., Eigler, D., Heinrich, A. (2012). Bistability in Atomic-Scale Antiferromagnets. Science, 335 (6065), 196–199. DOI: 10.1126/science.1214131