how would the nsa surf a tsunami of data?

The NSA wants to scan the internet in real time and store whatever it can catch for later. But are they ready for the challenge that poses?

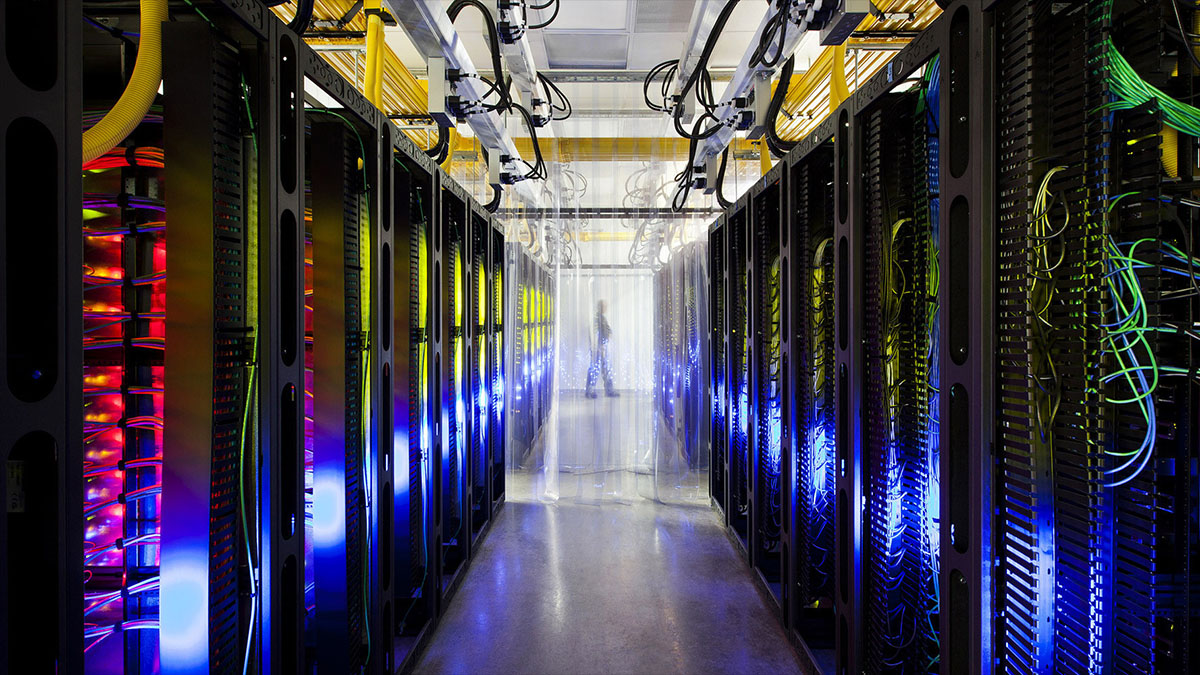

By now you’re probably getting a little sick of computing posts, but please do indulge me in just one more, this time with a national security twist. If you read Wired, you’ve probably seen their piece about a sprawling NSA data center being built in Utah with the supposed purpose of collecting and storing petabytes of data: every phone call, e-mail, website view, text, and IM that passes through the United States’ communication infrastructure. It really is an impressive undertaking in size, scope, and expense, but I’m curious whether having all that data at its disposal will actually give the NSA what it wants.

What good is being able to collect immense quantities of raw data and store it for years when you then have to keep supercomputers busy for years looking for just a few tidbits of useful info in an ocean of meaningless and irrelevant bits and bytes? Casting a dragnet over the entire world can certainly be done thanks to the constantly connected nature of our modern devices, but would the end result really be manageable? An analyst doesn’t need two terabytes of e-mails sent last week to give something actionable to her bosses. She needs just one e-mail that helps unravel a legitimate concern. Just flooding her with lots of data won’t make her more productive or efficient by any stretch of the imagination.

Here’s the problem with analyzing a torrent of freeform data. You can’t reliably flag it for further processing, and when you’re an agency tasked with staying paranoid and you have endless reams of data to scour for any sign of danger or conspiracy, you have a recipe for creating countless false positives. Sure, you can break the data you have into strings and parse them easily enough. Considering that the NSA will run this on supercomputers, it would be safe to assume that they’ll use a version of C++ to write their parsers (since it’s fairly fast and can be pretty easily compiled on a Linux variant), and all they’d need to do is call the string::find method on each bit of text they get with every one of their key phrases or words.
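To make that concrete, here is a minimal sketch of such a scan. The function name `find_key_phrases` and the watch list are my own hypothetical stand-ins, not anything the NSA is known to run; the only real API used is `std::string::find`.

```cpp
#include <string>
#include <vector>

// Returns every key phrase that occurs somewhere in `message`.
// Matching is exact and case-sensitive, which is all std::string::find offers.
// Hypothetical helper for illustration only.
std::vector<std::string> find_key_phrases(
    const std::string& message,
    const std::vector<std::string>& key_phrases) {
    std::vector<std::string> hits;
    for (const std::string& phrase : key_phrases) {
        // An empty phrase would "match" every message at position 0, so skip it.
        if (phrase.empty()) continue;
        if (message.find(phrase) != std::string::npos) {
            hits.push_back(phrase);
        }
    }
    return hits;
}
```

With a watch list of "bomb" and "attack", this flags "that movie was a total bomb" just as readily as a real threat, and it says nothing at all about "the package arrives at noon".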

Sounds simple enough, right? And it is. But it would also catch absolutely everything that has these key phrases and return way too many potential hits. Or none, if a bad key phrase was chosen or the potential targets are using innocuous code words. One could try to teach a computer to find unusual word combinations based on an analysis of millions of ordinary messages with a rather straightforwardly implemented naive Bayes classifier, but that would involve a longitudinal study of how e-mails are written. Considering typos, grammatical errors, inside jokes, and link URLs, there’s really no guarantee of reliable or accurate results. And spam? I don’t even want to think of how to handle that.
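For the curious, a toy version of that classifier might look like the sketch below. It is a two-class multinomial naive Bayes with add-one smoothing, and everything in it (the `NaiveBayes` class, the whitespace tokenizer, the "flagged" versus "ordinary" labels) is my own assumption for illustration rather than a description of any real system.

```cpp
#include <cmath>
#include <map>
#include <set>
#include <sstream>
#include <string>
#include <vector>

// Splits on whitespace only; real mail would need far more careful tokenizing.
std::vector<std::string> tokenize(const std::string& text) {
    std::istringstream in(text);
    std::vector<std::string> words;
    std::string word;
    while (in >> word) words.push_back(word);
    return words;
}

// Hypothetical two-class multinomial naive Bayes with add-one smoothing.
class NaiveBayes {
    struct Counts {
        std::map<std::string, int> words;  // occurrences of each word
        int total_words = 0;
        int messages = 0;
    };

public:
    // Adds one labeled message to the training data.
    void train(const std::string& text, bool flagged) {
        Counts& c = flagged ? flagged_ : ordinary_;
        ++c.messages;
        for (const std::string& w : tokenize(text)) {
            ++c.words[w];
            ++c.total_words;
            vocabulary_.insert(w);
        }
    }

    // Log-odds of "flagged" versus "ordinary"; above zero leans flagged.
    double log_odds(const std::string& text) const {
        double score = std::log(prior(flagged_)) - std::log(prior(ordinary_));
        for (const std::string& w : tokenize(text)) {
            score += std::log(likelihood(flagged_, w)) -
                     std::log(likelihood(ordinary_, w));
        }
        return score;
    }

private:
    // Smoothed class prior, so an untrained class never yields log(0).
    double prior(const Counts& c) const {
        return (c.messages + 1.0) /
               (flagged_.messages + ordinary_.messages + 2.0);
    }

    // Smoothed P(word | class); the extra +1 reserves mass for unseen words.
    double likelihood(const Counts& c, const std::string& w) const {
        auto it = c.words.find(w);
        int count = (it == c.words.end()) ? 0 : it->second;
        return (count + 1.0) / (c.total_words + vocabulary_.size() + 1.0);
    }

    Counts flagged_;
    Counts ordinary_;
    std::set<std::string> vocabulary_;
};
```

Train it on a handful of labeled messages and `log_odds` will lean the right way for text that reuses the training vocabulary. Misspell the suspicious words, though, and they become unseen tokens that carry almost no signal, which is exactly the typo problem described above.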

We could approach this from another angle and say that the NSA would have a vast repository of data to study when it finds evidence pointing to a specific person or group, and would be able to sift through terabytes of data to find all of their e-mails, phone calls, and encrypted communications going back years. Having a concrete target yields a lot more actionable information. And what’s even more important is the agency’s reported effort to break through most encryption with a supercomputer designed for brute force attacks against 128-bit keys. This isn’t a new or unexpected development by any means, because smaller keys have been cracked by sheer computing might in the recent past and security experts have already put an expiration date on 128-bit encryption.
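To get a feel for the scale of a pure brute force attack, here is a back-of-the-envelope sketch. The function `expected_years_to_brute_force` is hypothetical, and so is any guessing rate you feed it, since nobody outside the agency knows what its hardware can do. It also models only plain exhaustive search, in which half of the keyspace is tried on average; real attacks tend to go after weaknesses in an algorithm or its implementation instead.

```cpp
#include <cmath>

// Expected years to recover a key by exhaustive search, assuming that on
// average half of the 2^key_bits possible keys are tried before a hit.
// `keys_per_second` is an assumed guessing rate, not a known figure.
double expected_years_to_brute_force(int key_bits, double keys_per_second) {
    const double seconds_per_year = 365.25 * 24.0 * 3600.0;
    // ldexp(1.0, n) computes 2^n, so this is half of the full keyspace.
    const double expected_trials = std::ldexp(1.0, key_bits - 1);
    return expected_trials / keys_per_second / seconds_per_year;
}
```

Every extra bit doubles the answer. At an assumed trillion guesses per second, a 56-bit key falls in well under a year, while a 128-bit key at an assumed quintillion guesses per second still works out to trillions of years.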

But it’ll take a lot of time and effort to read encrypted data, and by the time a message is decrypted, the actionable intelligence it contains might no longer be relevant. The agency could be left trying to catch up to its targets, always a step or two behind because it takes so long to decrypt what it intercepts. This is what happens when you run out of easy problems to solve in dealing with a lot of unsorted, freeform data coming at you like a tsunami. Every somewhat plausible solution will involve something very exotic, very large, complex, and expensive, and very difficult to judge on efficacy before actually trying it out.