Exploring bleeding-edge experiments, oddities, new and bizarre discoveries, and fact-checking conspiracy theories since 2008. No question is out of bounds and no topic is too strange for a deep dive.

# space

Neutron stars’ thunder is usually stolen by black holes, but these bizarre objects living on the edge of physics create plenty of fascinating phenomena all on their own.

# tech

Thanks to social media, everybody can be a pundit today, and that’s ruining how we build a factual understanding of our world and what’s happening in it.

# politics

Many of us are living in a dystopian future, but realizing that fact and making changes to fix it are much more difficult than they sound.

# politics

Organized skepticism was once covered in every major publication around the world. Why did it seemingly vanish overnight? And what damage did its implosion leave behind?

# podcast

Despite the lingering obsession with IQ scores being the arbiter of your fate and intelligence, they’re probably pretty useless in the grand scheme of things.

# podcast

QAnon and social media conspiracy theorists found the cure for literally everything. It’s called a med bed, and it’s straight out of science fiction. Literally.

# podcast

If you’re wondering when artificial intelligence will start destroying the world as we know it, wonder no more. That time is now.

# podcast

With the crypto market having cooled off after a very long series of high-profile busts, crashes, and rug pulls, we take another look at the next big thing that never was: Web3.

# tech

Hollywood writers are once again on strike. Their goal? Nothing less than figuring out how humans and AI can coexist with runaway late-stage capitalism.

# tech

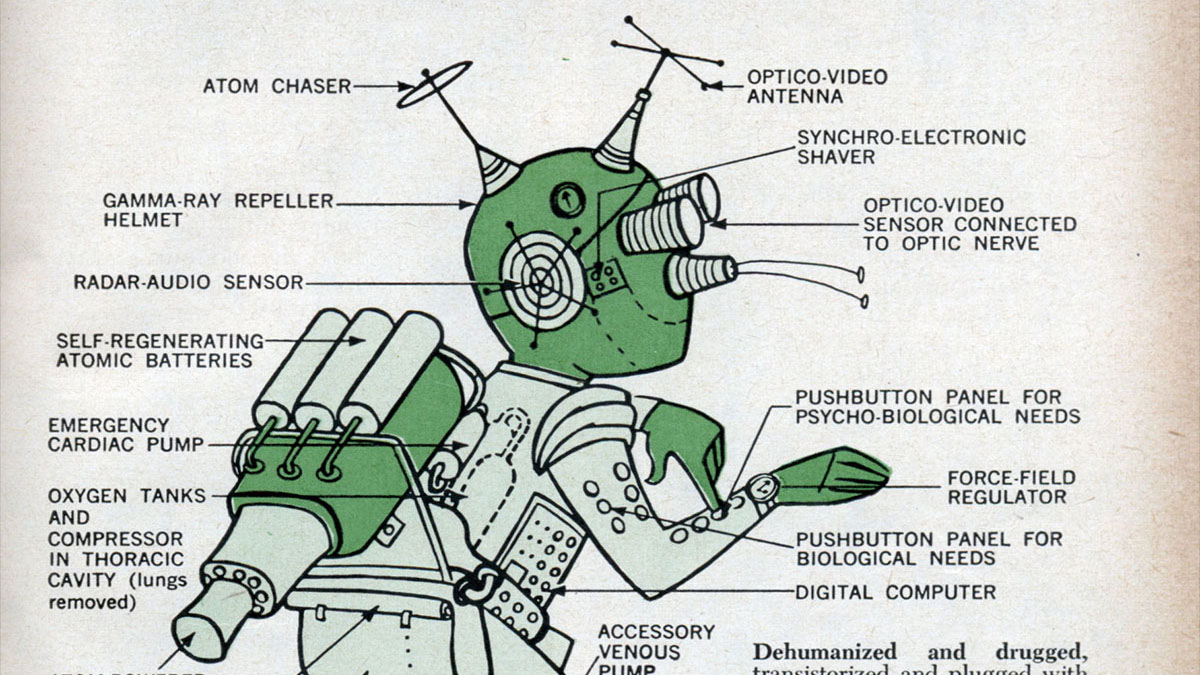

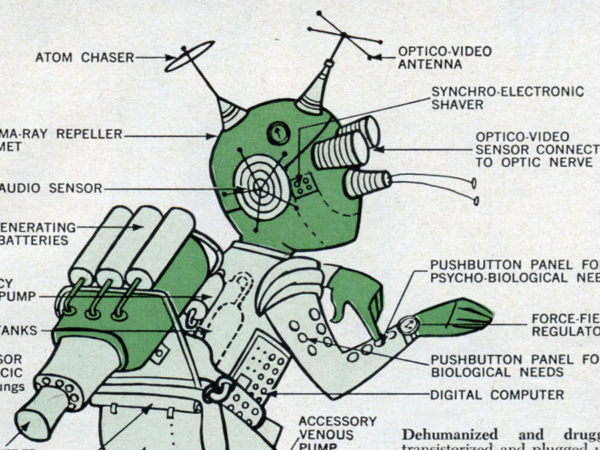

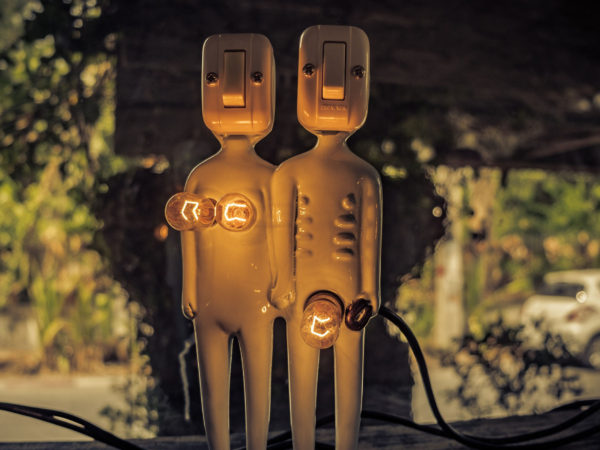

Our nearly inevitable cyborg overlords may have a lot more squish and atomic-scale wiring than science fiction taught us to expect.

# space

Scientists figured out an ingenious recipe for extra strong concrete perfect for habitats on the Moon, Mars, and beyond.

# science

New research into the psychological roots of greed shows that greed may mean access to more money and luxuries, but at the cost of damaging literally everything else.