Exploring bleeding-edge experiments, oddities, new and bizarre discoveries, and fact-checking conspiracy theories since 2008. No question is out of bounds and no topic is too strange for a deep dive.

# podcast

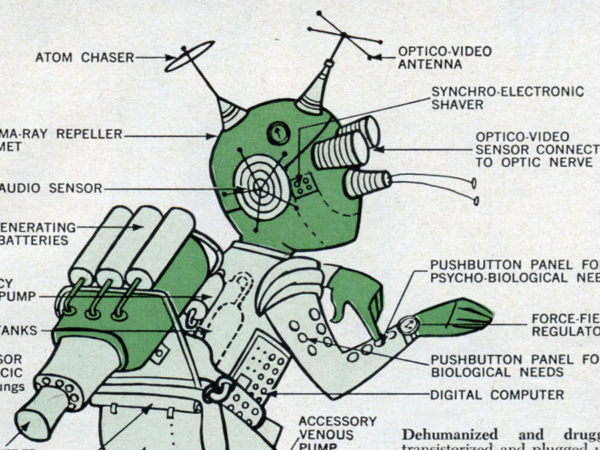

On a very special extended episode, we talk about the science of transhumanism, cyberpunk, extreme life extension, and our potential future merger with machines.

# tech

Code storage and sharing platform GitHub wants to archive open source software for up to 10,000 years. But is that a good idea, and could we use its momentum to rethink how and why we code?

# tech

As we learn more about how we evolved and accelerate our technical know-how, some are wondering if we can use science and technology to overcome our limitations.

# tech

Futurists once dreamed of an automated, leisurely utopia. Our failure to live up to their visions is taking a massive toll on our health and politics.

# science

Like warp drives, suspended animation, and teleportation, antimatter is a frequent staple of science fiction. But what exactly is it, and could we ever use it to do amazing things?

# science

Humans have been thinking about modifying themselves to survive the rigors of space flight for a long time now. Thankfully, our ideas for how to do it have vastly improved.

# tech

Elon Musk's startup is raising money to protect humanity from runaway adoption of artificial intelligence by merging our minds with machines. Here's how it could succeed.

# podcast

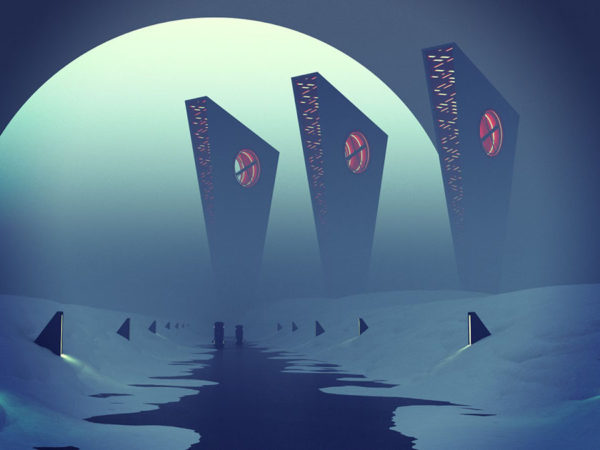

Of all the worlds to which humanity may travel, the most typical one would require our colonists to live under a sky in which the sun never rises or sets because it physically can’t.

# sci-fi saturday

Each installment of Sci-Fi Saturday, an attempt at introducing science fiction at Weird Things, was given a very positive reception, so the experiment is expanding. Here’s how and why.

# podcast

Humans have spent millennia looking for a fountain of youth or a recipe for immortality. Why have we been failing? And is there anything we can do to make death optional?