Exploring bleeding-edge experiments, oddities, new and bizarre discoveries, and fact-checking conspiracy theories since 2008. No question is out of bounds and no topic is too strange for a deep dive.

# education

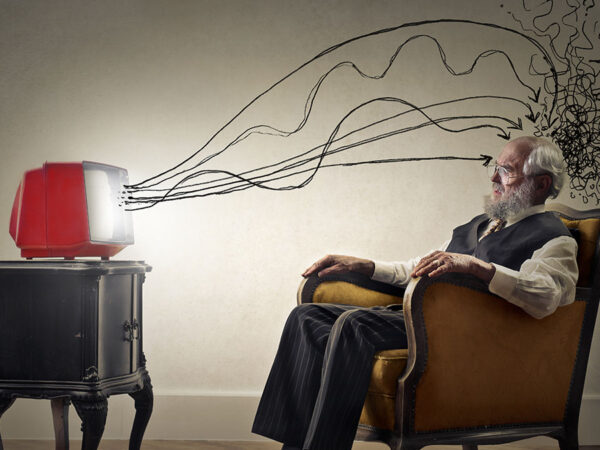

Today’s media is addicted to contrarian takes on everything. And those takes are almost always just attention-grabbing clickbait.

# health

Joe Rogan and other prominent anti-science mouthpieces are now trying to pull off an old conspiracy theory Jedi mind trick when discussing the pandemic.

# science

When science, the media, and short-sighted metrics meet, the end result is often long on flash and short on facts.

# politics

I tried writing about politics for a year and a half. My experience was eye-opening in the worst possible ways...

# science

Scientists search for truth, not "balance" to appease partisans who already hate them anyway. If they get involved in politics, they shouldn't change their attitudes.

# tech

If you thought fake news was bad, welcome to the hell that is fake fact-checking.

# oddities

Alex Jones' deepest, darkest fear isn't reptilian aliens. It's experts and wonks who cramp his and his fans' style.

# tech

The start of a new experiment for a new era of blogging.

# tech

Amazon is not a nice or fun place to work, even if you're one of its supposedly vaunted techies. But it's not like we expect any better from a company that big.

# astrobiology

Nick Redfern’s publishers really didn't like having his theory debunked...